FRI Image & Acoustic Signals Analysis Research Stream

See, Hear, Understand—Teaching Machines to Perceive the World Like We Humans Do

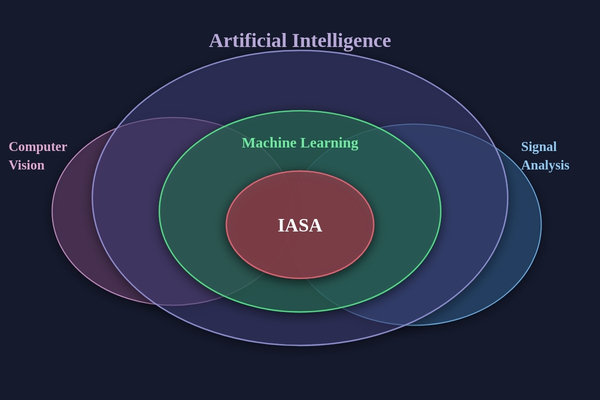

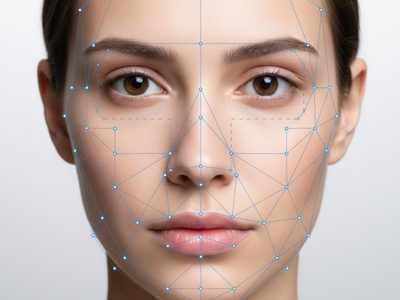

Image & Acoustic Signals Analysis (IASA) uses state-of-the-art equipment and techniques to analyze digitized signals for applications such as facial and speech recognition. The research carried out by first-year students in the IASA research stream at Binghamton University addresses a variety of problems, including autism classification through gaze, automatic sign language recognition through gesture recognition, and facial expression recognition using state-of-the-art techniques.

IASA research sits at the intersection of the traditional disciplines of Computer Science, Computer Engineering and Electrical Engineering. FRI IASA students tackle state-of-the-art questions related to biometrics, human-computer interaction and robotics. The questions answered here will help give people a better quality of life, improve communication and make the world a safer place to live.

Research Themes

Privacy and Anonymization

Deep Fakes

AI for Good

Biological Signal Analysis

Synthetic Media and Image Generation

Facial Expression Recognition

Research Educator

Umur Ciftci

Image and Acoustic Signals Analysis, Research Assistant Professor

Research Techniques

Core Skills

Computer Vision

- Image Analysis

- Video Analysis

- Object Recognition

Artificial Intelligence

- Deep Learning

- Generative AI

- AI Agents

Human-Computer Interaction

- Multimodal interfaces

- AI for good

- Biofeedback systems

Tools

Programming

- Python

Deep Learning

- PyTorch

Computer Vision

- OpenCV

Research Projects

Cohort 11 (2024-2025)

- AuraCity: Multi-agent system for complex task decomposition

- Dino-tective: Using DinoV3 to detect AI generated images

- MultEmotion: Late temporal fusion of EEG, facial expression, and heart rate for multimodal emotion recognition

- YANA: Your ASL native assistant

Cohort 10 (2023-2024)

- Automated vehicle identification using traffic infrastructure

- Built DIFFerent: changing the seasons of images using diffusion

- Fantastic fashion: a blend of technology and style

- D³: a dynamic deepfake dataset

- Synthesized satellite imagery: how fine tuning improves diffusion model quality

Cohort 9 (2022-2023)

- Automatic strike zone detection in baseball

- Black-box adversarial face transformation network

- Investigation of racial bias in facial recognition algorithms

- Unveiling the digital masquerade: Techniques in deepfake detection & sourcing

Cohort 8 (2021-2022)

- CopyMarth: replicating player behavior in Super Smash Brothers Melee

- Generating NeRF-based high fidelity head portraits

- Text-to-image building facade synthesis

- Transferring 2D garments onto 3D models using a clothing-segmentation

- Utilizing deepfakes to anonymize children online

Cohort 7 (2020-2021)

- Auto-generating commentary for esports

- Deepfake video detection by analyzing facial landmark locations

- Evaluating the impact of external stimuli in human-robot interaction

- Integrating deep Q-learning with learning from demonstration to solve Atari games

- Machine learning and mahjong: an exploration of AI and human-robot interaction (HRI)

- Using facial mimicry to determine power and status differentials in group meetings over virtual platforms

Cohort 6 (2019-2020)

- A benchmark dataset for bluff detection in poker videos using facial analysis

- Human motion synthesis to generate dance movements using neural networks

- Randomized and realistic 3D avatar generation using generative adversarial networks

- Robot navigation and object detection

- Simulation of a robotic arm to assemble a tower of unique objects

Cohort 5 (2018-2019)

- A Simplified Approach for Falsified Video Detection

- Assigning Autonomous 2D Navigation Goals Utilizing Eye Gaze

- Fatigue Detection Model Using Deep and Auxiliary Facial Feature Analysis

- Find the Litter: a semi-supervised machine learning method for automatic litter detection

- Multimodality Based Facial Expression Recognition

Cohort 4 (2017-2018)

- Extracting and Applying Gaze Data for Gaze Pattern Identification

- Holistic Identification of Scene Text with a General Image Classification CNN

- Sorting Recyclable Waste to Prevent Contamination Using a Convolutional Neural Network

Cohort 3 (2016-2017)

- 3D Object Detection for Visual Impairment

- Using Artificial Occlusion to Facilitate Low-Resource Facial Recognition on Occluded Images

- Gaze Patterns of Location Recognition/Non-Recognition

- Facing Kinect Sensors to Differentiate Biological Gender Unobtrusively through Gait Detection

Cohort 2 (2015-2016)

- Autism classification through gaze

- Gesture recognition for automatic sign language interpretation

- Pose estimation for automated control

Research Stream Collaborators

Scott A. Craver

Associate Professor; Undergraduate Director
